Three Years of ChatGPT: 76% of Americans Distrust AI

In the three years since ChatGPT launched in 2022, generative AI has swept across the globe. Over that time, American society’s attitude toward AI has shifted rapidly from initial awe to a profound, multifaceted divide. This divide is not merely about liking or disliking AI; it cuts along economic, political, social, and ethical fault lines, splitting groups into camps that struggle to communicate.

From an economic perspective, AI is exacerbating a ‘K-shaped’ divide, with unequal distribution of benefits and costs.

The wealth generated by technological acceleration has not reached the broader public, as trickle-down theory would predict, but has been captured by a small elite. Data shows that the top 1% of U.S. households saw their share of total assets rise from 27% in 2022 to 28.9% in 2025, while the share held by the bottom 50% fell from 6% to 5.3%.

Capital is concentrating at an unprecedented rate in AI core companies, with the capital expenditures of the ‘Big Seven’ tech giants, including Microsoft and Google, accounting for nearly one-third of total corporate spending in the U.S. However, the costs of AI expansion are borne by ordinary citizens: the construction of data centers has driven up electricity prices in some areas by as much as 267%.

A Federal Reserve report finds that more than a third of adults would struggle to cover an unexpected $400 expense. Against that backdrop, anxiety over AI-driven job losses stands in glaring contrast to the wealth accumulating among tech elites.

Turning to the political spectrum, AI regulation has become a focal point of intense battles between the two parties, with consensus evaporating.

Within the Democratic Party, progressives and moderates are at odds. Senator Bernie Sanders has co-sponsored the “AI Data Center Moratorium Act,” advocating for a complete freeze on new AI data centers to protect livelihoods. However, fellow Democrat Senator Fetterman criticized the bill as a “China-first” policy, arguing it would undermine U.S. competitiveness.

The Republican Party largely opposes strong federal regulation, and the Trump administration is pushing for a national policy while attempting to limit states’ legislative powers. These divisions have made it nearly impossible to establish an effective national regulatory framework: California’s strictest AI safety bill was vetoed by the governor, and federal regulatory measures continue to be relaxed.

From a social structural perspective, different industries and job roles experience AI in vastly different ways.

Knowledge-intensive service sectors and manual labor industries seem to inhabit two separate worlds. A Goldman Sachs report indicates that the AI usage rate in computing and web hosting companies is as high as 60%, followed closely by finance, insurance, and professional services.

Analysis by an OpenAI co-founder suggests that professions such as software development, legal assistance, and writing are affected by AI at a level of 9 out of 10, while jobs such as construction work and cleaning are affected at a level of only 1-2. This disparity produces a divide in both perception and interests: about 70% of corporate managers believe AI enhances efficiency, while among regular employees the figure is just above 50%.

On one side is the anxiety of being replaced; on the other, enthusiasm for efficiency gains. Common ground is becoming increasingly scarce.

On the ethics of the technology, specific controversial incidents keep eroding public trust and pushing divisions to extremes.

A series of events has amplified societal unease:

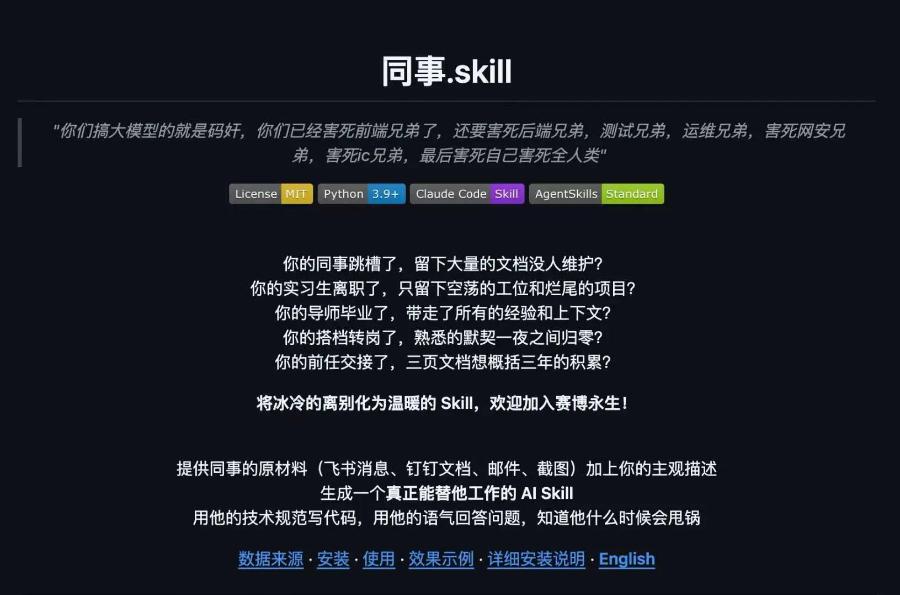

- “AI Refinement” of workers: Companies have trained AI avatars using the chat records and work data of laid-off employees, sparking widespread outcry over digital personality rights and the exploitation of labor value.

- Risks of losing control: The collective failure of autonomous taxis in Wuhan exposed safety hazards when AI systems are applied at scale.

- Escalation of violence: Hostility toward AI elites has moved from online protest to physical violence. OpenAI CEO Sam Altman’s residence was attacked with Molotov cocktails, and officials in Indiana who supported data center construction faced gunfire at their homes. This marks a shift from ideological debate to physical attacks against individuals.

Taken together, these dimensions point to an emerging ‘zero-sum game’ with no clear winners.

Three years after ChatGPT’s launch, American society has not reached a new consensus on how to harness AI; instead, existing fractures have been exacerbated by technology. Economically, the concentration of benefits in the top tier reinforces the ‘K-shaped’ structure; politically, the tug-of-war between the parties over regulation and innovation has reached a stalemate; socially, different occupational groups are at odds due to differing self-interests; ethically, specific cases of technological abuse continue to erode an already fragile public trust.

This creates a vicious cycle: economic inequality exacerbates social anxiety, political polarization leaves a regulatory vacuum, and that vacuum allows ethical disorder in technology. Each ethical failure in turn intensifies opposing sentiments and can even breed violence.

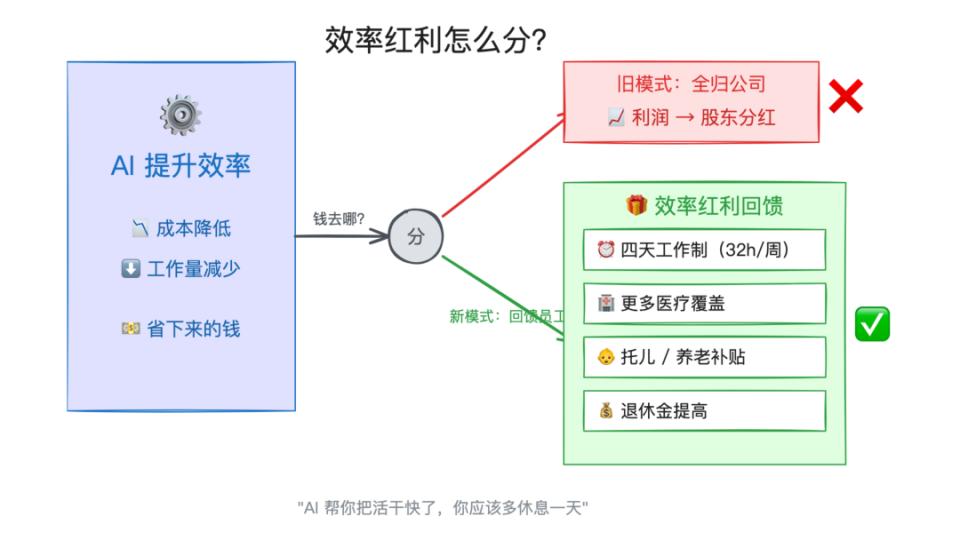

After the attack, Altman declared that “AI must be democratized, and power cannot be overly concentrated,” and released a white paper proposing a trial of a four-day workweek and tax reforms to share the benefits. This can be seen as a response from tech elites to the current divisions.

However, with 76% of Americans distrusting AI while industry giants invest over $300 million in political lobbying, the bridge of trust has long since collapsed.

AI has not directly created new lines of division, but it acts like a powerful photographic developer, bringing the existing problems of wealth disparity, political polarization, and class stratification in American society into sharp relief. Until there are systematic solutions for distributing benefits fairly, rebuilding trust in regulation, and safeguarding the value of labor, this technology-fueled fracture in social consensus is unlikely to heal.
